The Turing test has well-known weaknesses. Machines that pass it tend to rely on tricks rather than bona fide intelligence, which is not what Turing intended. Several thinkers have therefore put forward ideas on how to improve or replace the Turing test. Let us discuss some of these alternative methods.

Hector Levesque, a professor of computer science at the University of Toronto, introduced an alternative to the Turing test called the Winograd Schema Challenge (WSC). The test is named after the computer scientist Terry Winograd. It was introduced as a remedy for a major flaw of the Turing test: machines can pass it with tricks rather than true intelligence. The performance of the chatbot Eugene Goostman exposed some of the flaws of the Turing test, and Levesque evaluated those problems and summarized them.

What differentiates this method from the Turing test is the special format of its questions. It is a multiple-choice test in which every question follows a specific format. Levesque argued that a machine must use knowledge and commonsense reasoning to pass this test and be declared intelligent. The test aims to measure the ability to understand the deeper meaning of ambiguous sentences.

Let's have an example:

Question- The trophy would not fit in the brown suitcase because it was too big(small). What was too big(small)?

Answer 0: The trophy

Answer 1: The suitcase

If the question is posed with the word “big”, the answer is “0: The trophy”. If the question is posed with the word “small”, the answer is “1: The suitcase”.

A question like this is simple for a human. A computer, however, needs knowledge about the sizes of objects, interpersonal reasoning, and some common sense to answer it.
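The question format described above can be sketched as a small data structure. The following is a minimal illustration, not an official WSC implementation: the names `WinogradSchema`, `accuracy`, and `always_first` are hypothetical, and the example sentence is the well-known trophy and suitcase schema from the WSC literature. The point it shows is that a program which ignores the special word cannot do better than chance, because swapping the word flips the correct answer.

```python
from dataclasses import dataclass


@dataclass
class WinogradSchema:
    """One schema: a sentence whose answer flips with a single special word."""
    template: str      # contains "{word}" where the special word is inserted
    candidates: tuple  # the two possible referents (answer 0, answer 1)
    answers: dict      # special word -> index of the correct candidate

    def question(self, word):
        return self.template.format(word=word)


def accuracy(schemas, resolver):
    """Fraction of (schema, special word) variants that `resolver` gets right."""
    total = 0
    correct = 0
    for schema in schemas:
        for word, expected in schema.answers.items():
            guess = resolver(schema.question(word), schema.candidates)
            correct += int(guess == expected)
            total += 1
    return correct / total


# Hypothetical encoding of the trophy/suitcase schema.
trophy = WinogradSchema(
    template=("The trophy would not fit in the brown suitcase "
              "because it was too {word}. What was too {word}?"),
    candidates=("the trophy", "the suitcase"),
    answers={"big": 0, "small": 1},
)


def always_first(question, candidates):
    """A trick-based resolver (an assumption for illustration): always pick answer 0."""
    return 0


print(accuracy([trophy], always_first))  # 0.5
```

Because each schema comes as a pair of variants with opposite answers, a resolver that relies on a fixed guess or on surface word statistics ends up near 50 percent.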

Advantages:

The main difficulty with the Winograd Schema Challenge is that the questions must be developed carefully to ensure that answering them really requires commonsense reasoning.

Gary Marcus, a cognitive scientist at NYU, argued that the winner of the Turing test isn’t genuinely intelligent, and he has harshly criticized the current format of the test. He recently organized a workshop on the importance of “Thinking beyond the Turing test,” which brought together many experts with interesting ideas. Marcus himself devised an alternative, which is now called the Marcus Test. Marcus said that Goostman and ELIZA rely mainly on pattern recognition and have no genuine understanding. Drawing on two decades of experience as a cognitive scientist, he proposed a Turing test for the twenty-first century: create a computer program that can watch any TV program or YouTube video and answer questions about its content. Goostman can hold up under such questions only for a short period, and only by faking. Marcus argued that no existing program comes close to doing what any bright teenager can do.

His idea is that if a computer can detect and understand humor and mockery, and can explain them in a meaningful way, then the computer has some ability to think.

The Lovelace Test was proposed by Selmer Bringsjord and colleagues in 2001 as a remedy for the major flaw of the Turing Test. The test is based on creativity and is named in honor of Ada Lovelace, who is considered the first computer programmer. It checks whether an artificial agent can create an output in a way that its developer cannot explain. To pass the Lovelace Test, an artificial agent (a) programmed by a human programmer (h) has to generate an output (o) that the programmer cannot explain.

To pass the test, the artificial agent has to create an artifact in a genre considered to require human-level intelligence. The artifact must meet certain criteria given by a human evaluator. The evaluator has to determine whether the artifact is a valid example of the genre and satisfies the demanded criteria. The evaluator also has to ensure that the combination of genre and criteria is not an impossible standard.

The test was recently upgraded by Georgia Tech professor Mark Riedl.

The main flaw of the Turing Test is that it focuses only on verbal behavior. To overcome this, Charlie Ortiz proposed a physically embodied version of the Turing Test: the Construction Challenge, formerly known as the Ikea Challenge. Ortiz added two main elements of intelligent behavior to be tested: perception and physical action.

In the Construction Challenge, robots compete to build physical structures such as furniture. To do this, a robot has to process verbal instructions about the model to be constructed, handle physical components to build it, and recognize the structure at every stage of construction so that it can answer questions or provide explanations. In parallel, the same model is constructed by human agents. There is also a track that investigates the grasp of commonsense knowledge about physical models.

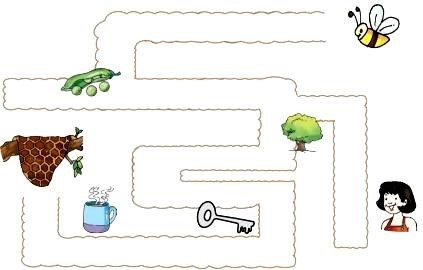

Michael Barclay and Antony Galton have developed a test of a machine’s visual ability.

Look at the picture. What are the things that the bee met on the way to its home?

This simple question was put to both humans and software: “What are the things that the bee met on the way to its home?” The multiple choices offered are all technically correct. Some people might choose “Pea, Tree, Key, Tea”, while others will choose “Pea, Tree, Key, Cup”. But if the aim is to name the words that rhyme with “bee”, the two options are not equally useful. Making the right choice requires several cues and judgments, including how the words sound, how they flow, and what is relevant in the particular situation. Humans handle this well, but machines fail.

Barclay and his colleagues chose this kind of human skill at visual description as a way to evaluate a machine’s intelligence. It has inspired the creation of devices that can interact with their surroundings as humans do.

To prove that a machine is intelligent, we need more than behavioral tests. We need to show that the machine possesses some machine equivalent of a human brain. To achieve this, we have to identify the machine equivalents of the Neural Correlates of Consciousness (NCC). The NCCs in the human brain remain a mystery to neuroscientists. As an alternative to the Turing Test, this idea has been set aside for now, but it is a potential pathway towards the development of an artificial brain and artificial consciousness.

The coffee test is an interesting testing method that is usually attributed to Apple co-founder Steve Wozniak and has been discussed by the AI researcher Ben Goertzel. In this test, the AI application has to go into any kitchen, find the ingredients needed to make coffee, and then make a good cup of coffee. Making a cup of coffee sounds very easy, but that is true only for humans. For a machine, identifying the ingredients and mixing them in the right amounts is hard, and doing so would demonstrate its intelligence.

In the robot college student test, an AI has to enroll in a college and earn a degree using the same resources as the other students enrolled for that degree. This test was proposed by Ben Goertzel. Bina48 was the first AI to complete a college class.

AI researcher Nils J. Nilsson proposed replacing the Turing test with an alternative called the “employment test”. It focuses on one of the most common human activities: jobs. To pass this test, the AI program must be able to perform jobs that are ordinarily performed by humans.

All of the above are possible alternatives to the Turing Test that aim to overcome its flaws. Still, some experts believe that the Turing Test itself does not have these limitations and that everything depends on how the test is conducted and judged. If a full Turing Test is conducted properly, they argue, it can do the job as Turing predicted.