In this Artificial Intelligence tutorial, we aim to learn about the agent environment in AI and how environments are classified.

The term "environment" is well known to everyone. The Oxford dictionary defines it as "the surroundings or conditions in which a person, animal, or plant lives or operates." In computing, it is the surroundings in which a computing device works or operates. In the context of Artificial Intelligence, an environment is simply the surroundings of an agent, the place where the agent operates. To learn more about agents, please visit our previous tutorial, Agents in AI.

Now, let's consider a real-life example of driving a car on the road. Can you guess which is the agent and which is the environment? Yes, the car is the agent and the road is the environment.

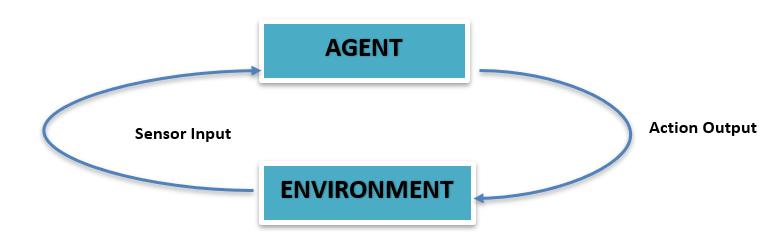

Similarly in AI, we have an environment that contains an agent, a sensor, and an actuator.

The figure above shows the simplest representation of agent-environment interaction. The agent sits within the environment. Sensors sense the environment and provide sensory inputs to the agent. The agent then chooses actions in response to those inputs and, through its actuators, delivers the output back to the environment.
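The sense-then-act cycle described above can be sketched in a few lines of Python. This is only an illustrative sketch: the `Room` environment, the `thermostat_agent` function, and the target temperature are hypothetical names and values chosen for the example, not part of any library.

```python
class Room:
    """Hypothetical environment: a room with a single temperature value."""

    def __init__(self, temperature):
        self.temperature = temperature

    def percept(self):
        # Sensor side: what the agent is allowed to read.
        return self.temperature

    def apply(self, action):
        # Actuator side: how an action changes the environment.
        if action == "cool":
            self.temperature -= 1
        elif action == "heat":
            self.temperature += 1
        # "idle" leaves the room unchanged.


def thermostat_agent(percept, target=22):
    """Map a sensory input to an action (assumed target of 22 degrees)."""
    if percept > target:
        return "cool"
    if percept < target:
        return "heat"
    return "idle"


def run(env, agent, steps):
    """Repeat the sense -> decide -> act cycle and record what happened."""
    history = []
    for _ in range(steps):
        percept = env.percept()
        action = agent(percept)
        env.apply(action)
        history.append((percept, action))
    return history


room = Room(temperature=25)
history = run(room, thermostat_agent, steps=5)
```

Here the agent never touches the room's state directly; it only sees what `percept` returns and only changes the room through `apply`, which mirrors the sensor and actuator boundary in the figure.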

For AI, the problem to be solved is itself a great challenge: simply understanding the given problem is a difficult task. Apart from reasoning, the most challenging aspect of an AI problem is the environment.

The agent and the environment can be described as the two hooks on which AI hangs. Put more simply, if the environment is the problem, then the agent is the solution to that problem; or the 'agent' is the game played on the ground called the 'environment'.

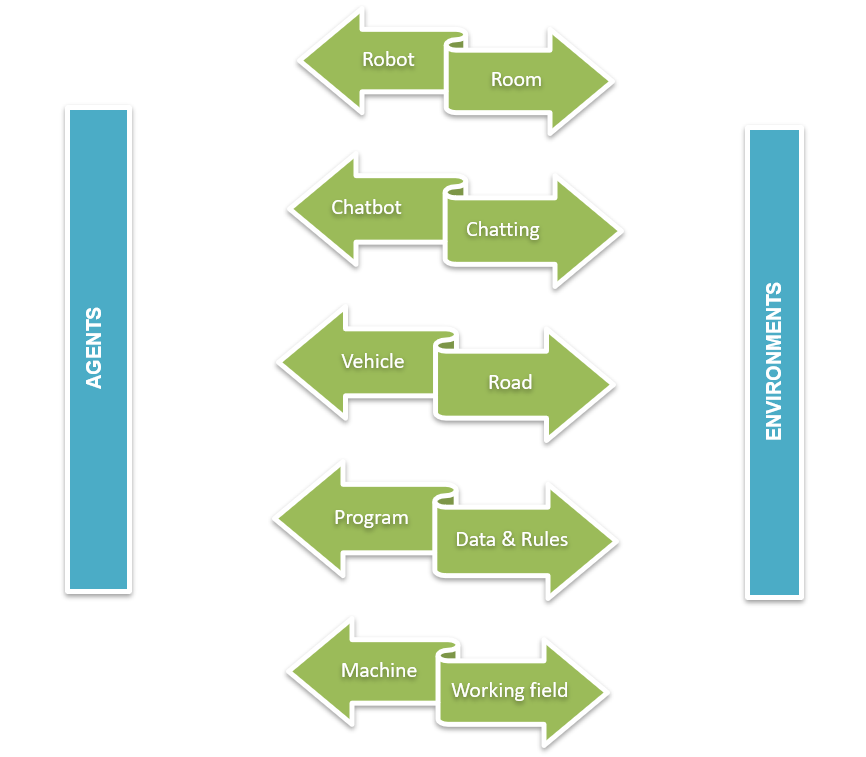

Some examples of agents and their environments are shown above for a clearer understanding. For the task of driving, the vehicle is the agent and the road is the environment. Sensor devices such as cameras, radar, and lidar collect information about the road: the presence of pedestrians, the number of other vehicles, the state of the traffic signals, and so on. The vehicle then acts on that information, for example by pressing the brake pedal, pressing the accelerator pedal, or taking a turn.

If a machine is an agent, then its workplace is its environment. If we consider a cooling system as the agent, then the industrial plant it works for is the environment. The coolant temperature sensor collects information, and the machine acts upon that information.

AI can also be called the study of rational agents and their environments. This statement alone makes clear how important the agent and its environment are for AI. The working of an AI system can be stated in a few words: through different types of sensors the agent senses the environment, and through actuators the agent acts on its environment. The structure of the agent environment affects the entire AI system.

Agents have only partial control over their environment, not full control. This means the agent influences the environment, but the agent's performance is in turn directly influenced by changes in the environment. That is, an agent performing the same task in two different environments can produce two entirely different outcomes. An agent is preprogrammed with a set of abilities that it can apply in the different situations it may face in its environment. These preprogrammed abilities are called effectoric capabilities. What the agent's sensors report is determined by the environment. The agent uses its effectoric capabilities and the information from its sensors to make decisions.

Not every action suits every situation: the precondition for an action must hold before the action can be performed. For example, an agent may be able to open a door, but only if the door is unlocked (the precondition). So the biggest problem for an agent in an environment is deciding which action to perform at a given instant to achieve the goal, or at least to work towards it. The properties of an environment directly affect the complexity of this decision-making process.
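The door example can be written down as a small precondition check. The sketch below is a hypothetical illustration: the `Door` class and the helper functions are invented for this example, and the agent simply picks the first applicable action.

```python
from dataclasses import dataclass


@dataclass
class Door:
    """Hypothetical environment state for the door example."""
    locked: bool
    is_open: bool = False


def applicable_actions(door):
    """Return the actions whose preconditions hold in the current state."""
    actions = []
    if door.locked:
        actions.append("unlock")   # precondition: the door is locked
    elif not door.is_open:
        actions.append("open")     # precondition: unlocked and still closed
    return actions


def step(door, action):
    """Apply an action, refusing it if its precondition does not hold."""
    if action not in applicable_actions(door):
        raise ValueError(f"precondition for {action!r} is not satisfied")
    if action == "unlock":
        door.locked = False
    elif action == "open":
        door.is_open = True
    return door


def reach_goal(door):
    """Keep choosing an applicable action until the door is open."""
    plan = []
    while not door.is_open:
        action = applicable_actions(door)[0]
        step(door, action)
        plan.append(action)
    return plan


plan = reach_goal(Door(locked=True))
```

Starting from a locked door, the agent has to unlock before it can open; trying `step(Door(locked=True), "open")` directly raises an error because the precondition fails.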

The agent environment in Artificial Intelligence is classified into different types. The classification is based on how the agent deals with the environment.

Classification is as follows:

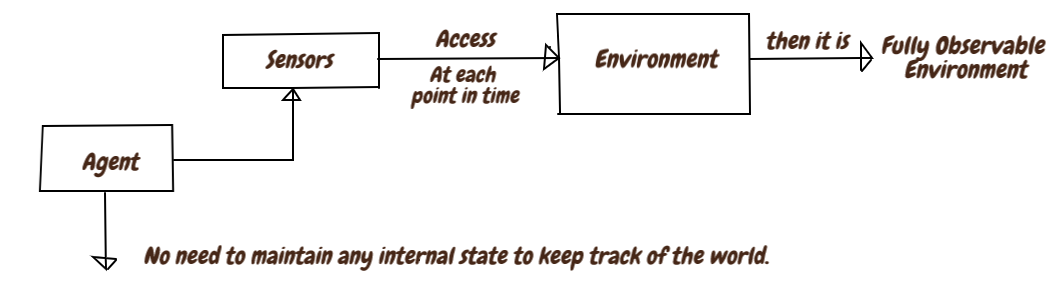

As the name suggests, in a fully observable environment the agent can observe the environment all the time: at each point in time, the sensors can sense or access the complete state of the environment. If the sensors capture only part of the state at a given time, the environment is partially observable. An environment that no sensor observes or accesses at any time is called unobservable. A fully observable environment is the most convenient, since the agent does not need to keep track of the world internally.

In real life, chess is an example of a fully observable environment, because each player gets to see the whole board. A simplified driving scenario can also be treated as fully observable if we assume the driver (agent) can see all the traffic signals, road conditions, and pedestrians on the road (environment) at a given time and drive accordingly, although real driving is only partially observable, since a driver cannot see around corners or know what other drivers intend.

A card game can be considered an example of a partially observable environment. Some of the cards are discarded into a face-down pile, and each player can see only their own cards. The used cards and the cards reserved for future draws are not visible to the player.
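The difference between the two cases can be pictured as the difference between the environment's state and the percept the agent receives. The following is a minimal sketch with a made-up card-game state; the function names and the layout of the `state` dictionary are assumptions for illustration only.

```python
def full_view(state):
    """Fully observable: the percept is the whole state, as on a chess board."""
    return state


def player_view(state, player):
    """Partially observable: a player sees only their own hand,
    plus how many cards sit in the face-down piles."""
    return {
        "hand": list(state["hands"][player]),
        "discard_size": len(state["discard"]),
        "deck_size": len(state["deck"]),
    }


# Hypothetical game state used only for this example.
state = {
    "hands": {"alice": ["7H", "KD"], "bob": ["2C", "9S"]},
    "discard": ["4D"],
    "deck": ["AS", "3H", "JC"],
}

alice_sees = player_view(state, "alice")
```

With `player_view`, Alice knows the sizes of the piles but not their contents, and she learns nothing about Bob's hand, so she must reason about what she cannot see.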

An environment that does not change while the agent is deciding on and carrying out an action is called a static environment. A static environment is the simplest to deal with, since the agent doesn't need to keep track of the world during an action. An environment is said to be dynamic if it can change while the agent is still deliberating; a dynamic environment keeps changing constantly. An environment that itself stays constant over time, while the agent's performance score changes with time, is called a semi-dynamic environment.

A crossword puzzle can be considered an example of a static environment: the puzzle is fixed at the beginning, and the grid neither expands nor shrinks while the agent works on it. The environment remains the same throughout.

For a dynamic environment, we can consider a roller coaster ride as an example. The environment changes at every instant once the ride is set in motion. The height, velocity, kinetic and potential energies, centripetal force, and so on vary from moment to moment.
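One way to picture the difference is to ask whether the state an agent observed is still valid after the agent has spent some time deciding. The sketch below uses two hypothetical toy environments, `StaticPuzzle` and `DynamicRide`, and models thinking time as a number of clock ticks; all names are assumptions made for the example.

```python
class StaticPuzzle:
    """Hypothetical static environment: time passing changes nothing."""

    def __init__(self):
        self.state = 0

    def tick(self):
        pass  # the puzzle waits for the agent


class DynamicRide:
    """Hypothetical dynamic environment: the state moves on every tick."""

    def __init__(self):
        self.state = 0

    def tick(self):
        self.state += 1  # the ride keeps moving regardless of the agent


def observation_still_valid(env, thinking_ticks):
    """Observe, 'think' for a while, then check whether the world moved."""
    observed = env.state
    for _ in range(thinking_ticks):
        env.tick()
    return observed == env.state


static_ok = observation_still_valid(StaticPuzzle(), thinking_ticks=3)
dynamic_ok = observation_still_valid(DynamicRide(), thinking_ticks=3)
```

In the static case the agent can take as long as it likes; in the dynamic case a slow decision is made on stale information, which is why dynamic environments are harder to handle.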

An environment with a finite number of possibilities is called a discrete environment: there is a finite number of distinct percepts and actions available on the way to the final goal. In a continuous environment, percepts and actions take values from a continuous range, so they cannot be counted.

In a chess game, the possible moves for each piece are finite. For example, the king can move only one square in any direction, as long as that square is not attacked by an opposing piece. Because the possible moves for each piece are fixed, chess is an example of a discrete environment, although the number of moves played varies from game to game.

Self-driving cars operate in a continuous environment. The surroundings change over time: traffic density, the speed of other vehicles on the road, and so on vary continuously.
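To make the contrast concrete, the sketch below enumerates a discrete action set (king moves on an otherwise empty board, ignoring checks) and represents a continuous action as a real number within a range. The steering limit of 30 degrees and both function names are assumptions for the example.

```python
def king_moves(file, rank):
    """Squares a king can step to on an empty 8x8 board.
    Coordinates run from 0 to 7; attacked squares are ignored here."""
    moves = []
    for d_file in (-1, 0, 1):
        for d_rank in (-1, 0, 1):
            if (d_file, d_rank) == (0, 0):
                continue  # staying put is not a move
            f, r = file + d_file, rank + d_rank
            if 0 <= f < 8 and 0 <= r < 8:
                moves.append((f, r))
    return moves


def clamp_steering(angle_deg, limit=30.0):
    """A continuous action: any real angle within an assumed +/- limit."""
    return max(-limit, min(limit, angle_deg))


corner_options = len(king_moves(0, 0))   # a finite, countable set
centre_options = len(king_moves(4, 4))
steer = clamp_steering(12.345)           # one of infinitely many values
```

The king's options can always be listed in full, whereas the steering angle can take any value between the limits, so a driving agent cannot simply enumerate its choices.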

An environment without uncertainty is called a deterministic environment. In a deterministic environment, the next state is completely determined by the present state of the environment and the action selected by the agent. An environment with a random nature is called a stochastic environment: the next state cannot be fully determined from the current state and the agent's action. Most real-world AI applications fall under the stochastic type. A partially observable environment can also appear stochastic to the agent, even when its underlying dynamics are deterministic.

In chess, the current position and the chosen move fully determine the next position. There is no uncertainty: the moves available to a piece from its present square can be worked out in advance, so chess can be grouped under deterministic environments.

But for a self-driving car, the outcome of an action cannot be determined from the present state alone, because the environment varies continuously. The car may have to brake or accelerate fully, depending on conditions at that moment. Because the next state cannot be predicted with certainty, this is an example of a stochastic environment.
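The distinction can be expressed as two kinds of transition function. In this sketch, which uses hypothetical function names and an assumed slip probability of 0.2, the deterministic step always gives the same result for the same input, while the stochastic step sometimes fails to move the agent.

```python
import random


def deterministic_step(position, action):
    """Same state and same action always give the same next state."""
    return position + action


def stochastic_step(position, action, rng, slip_prob=0.2):
    """With probability slip_prob (an assumed value) the action has no effect,
    so the next state cannot be known in advance."""
    if rng.random() < slip_prob:
        return position
    return position + action


rng = random.Random(0)  # seeded only so the example is repeatable
outcomes = {stochastic_step(0, 1, rng) for _ in range(200)}
```

Calling `deterministic_step(3, 1)` always returns `4`, whereas repeated calls to `stochastic_step` from the same state and with the same action produce more than one distinct result, which is what forces the agent to plan for several possible outcomes.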

An environment that contains only one agent is called a single-agent environment. All operations on the environment are performed and controlled by this agent alone. If more than one agent operates in the environment, it is called a multi-agent environment.

In a vacuum-cleaning environment, the vacuum cleaner is the only agent involved, so it can be considered an example of a single-agent environment.

Multi-agent systems (MAS), computer-based environments with multiple interacting agents, are the best example of a multi-agent environment. Computer games are a common MAS application. Biological agents, robotic agents, computational agents, and software agents are some of the agents that can share the environment in a computer game.

An episodic environment, also called a non-sequential environment, is one with a series of actions in which the agent's current action does not influence its future actions. In a sequential, or non-episodic, environment the agent's current action affects future actions.

In a classification task, the agent receives each piece of information from the environment as it arrives and acts only on that piece of information. The current action has no influence on the next one, so the task can be grouped under episodic environments.

But in a chess game, the current move of a piece influences future moves. If a piece steps forward now, the moves available afterwards depend on that step. Chess is therefore sequential.
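A short sketch can show why the two cases differ. In the episodic function each decision looks only at the current input, while in the sequential function every move changes the position from which later moves start. The threshold of 50 and both function names are hypothetical choices for the example.

```python
def classify_episodes(readings, threshold=50):
    """Episodic: each decision depends only on the current percept."""
    return ["high" if r > threshold else "low" for r in readings]


def play_sequence(start, moves):
    """Sequential: each move changes the state that later moves build on."""
    position = start
    path = [position]
    for move in moves:
        position += move
        path.append(position)
    return path


labels = classify_episodes([10, 80, 50])
path = play_sequence(0, [1, 2, -1])
```

Reordering the readings only reorders the labels, because no decision depends on an earlier one. Changing the first move in `play_sequence`, by contrast, shifts every later position on the path.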

Known versus unknown describes the agent's state of knowledge rather than a property of the environment. If the agent knows the results of all possible actions, the environment is known. If the agent is not aware of the results of its actions and has to learn about the environment to make decisions, the environment is unknown.

If the agent's sensors have complete access to the state of the environment, it is called an accessible environment. Otherwise, the environment is inaccessible: the agent does not have complete access to the environmental state.

| Task Environment | Observable | Deterministic | Episodic | Static | Discrete | Agents |
|---|---|---|---|---|---|---|
| Crossword Puzzle | Fully | Deterministic | Sequential | Static | Discrete | Single |
| Taxi Driving | Partially | Stochastic | Sequential | Dynamic | Continuous | Multi |
| Medical Diagnosis | Partially | Stochastic | Sequential | Dynamic | Continuous | Single |
| Image Analysis | Fully | Deterministic | Episodic | Semi-dynamic | Continuous | Single |

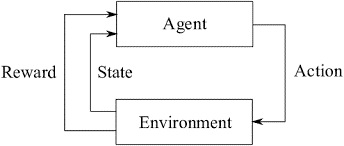

Interaction between the agent and the environment is a time-stepped procedure. At each time step, the agent receives a representation of the environment state. Based on this information, the agent selects an action from those available in that state. One time step later, the agent receives a numerical reward as a result of that action and finds itself in a new state. This interaction is continuous: the agent selects actions, and the environment responds to them and presents new situations to the agent.

A typical learning structure of AI is shown above.

AI uses learning techniques to solve the given task. The diagram shows how the agent interacts with the environment. As the agent acts, the state of the environment changes; the environment informs the agent of the change and hands it a reward. This cycle continues until the goal is reached.
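The state, action, and reward cycle can be sketched as a simple loop. The example below is a minimal illustration under stated assumptions: the `Corridor` environment, its reward values (1.0 at the goal, -0.1 per other step), and the fixed "always move right" policy are all hypothetical, and no learning takes place; it only shows the shape of the interaction.

```python
class Corridor:
    """Hypothetical environment: cells 0..goal, the agent starts in cell 0."""

    def __init__(self, goal=4):
        self.goal = goal
        self.state = 0

    def step(self, action):
        """Apply action (-1 = left, +1 = right) and return the new state,
        a numerical reward, and whether the goal has been reached."""
        self.state = max(0, min(self.goal, self.state + action))
        done = self.state == self.goal
        reward = 1.0 if done else -0.1  # assumed reward scheme
        return self.state, reward, done


def run_episode(env, policy, max_steps=20):
    """Agent picks an action, environment answers with state and reward,
    repeated until the goal is reached or max_steps is used up."""
    state = env.state
    total_reward = 0.0
    for t in range(max_steps):
        action = policy(state)
        state, reward, done = env.step(action)
        total_reward += reward
        if done:
            return t + 1, total_reward
    return max_steps, total_reward


steps_taken, total_reward = run_episode(Corridor(goal=4), policy=lambda s: +1)
```

A learning agent would replace the fixed policy with one that is adjusted from the rewards it receives, but the loop around it would stay the same.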