In this tutorial, we discuss agents in Artificial Intelligence. An AI system consists of an agent and its environment. An intelligent agent is a software entity that enables artificial intelligence to take action. It senses the environment, uses actuators to initiate actions, and carries out operations on behalf of users. Put simply, an Intelligent Agent (IA) is an entity that makes decisions.

Anything that perceives its environment through sensors and acts upon that environment through actuators is called an agent. An agent cycles through perceiving, thinking, and acting. An agent can be:

An intelligent agent is an agent that can perform specific, predictable, and repetitive tasks for applications with some degree of autonomy. These agents can learn while performing tasks, and they have some human-like mental properties such as knowledge, belief, and intention. A thermostat, Alexa, and Siri are examples of intelligent agents.

The main functions of intelligent agents are

Rule 1: Must have the ability to recognize the environment.

Rule 2: Decisions are made from observations.

Rule 3: Decisions should result in actions.

Rule 4: The action must be rational.

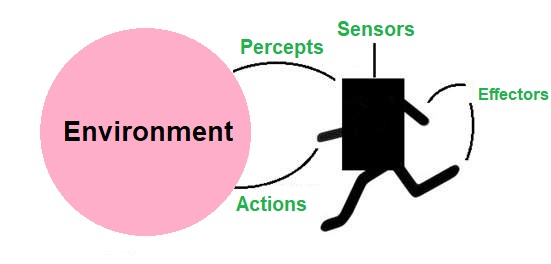

Sensors, actuators, and effectors are the three main components through which intelligent agents work. Before going into detail, we should first define each of them.

Sensor: A device that detects environmental changes and sends the information to other devices. An agent observes the environment through sensors. E.g.: Camera, GPS, radar.

Actuators: Machine components that convert energy into motion. Actuators are responsible for moving and controlling a system. E.g.: electric motor, gears, rails, etc.

Effectors: Devices that affect the environment. E.g.: wheels and display screens.

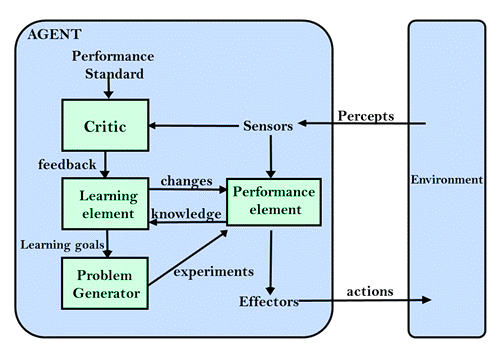

The above diagram shows how these components are positioned in the AI system.

The intelligent agent receives percepts (inputs from the environment) through its sensors. It then uses artificial intelligence to make decisions based on these observations, and actuators trigger the resulting actions. The percept history and past actions influence future decisions.
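The cycle described above can be sketched as a small loop. This is only an illustration: the `Agent` class, the `thermostat_policy` function, and the percept and action strings are hypothetical names chosen for this example, and the policy here happens to look only at the latest percept even though the full history is available to it.

```python
from typing import Callable, List


class Agent:
    """Minimal perceive-decide-act loop (all names are illustrative)."""

    def __init__(self, decide: Callable[[List[str]], str]) -> None:
        self.decide = decide                    # decision logic supplied by the caller
        self.percept_history: List[str] = []    # everything sensed so far
        self.action_history: List[str] = []     # everything done so far

    def step(self, percept: str) -> str:
        # 1. Sense: record the new percept.
        self.percept_history.append(percept)
        # 2. Decide: the policy may inspect the whole percept history.
        action = self.decide(self.percept_history)
        # 3. Act: here we only record the action an actuator would carry out.
        self.action_history.append(action)
        return action


def thermostat_policy(history: List[str]) -> str:
    # Hypothetical rule: heat when the most recent reading is "cold".
    return "heat_on" if history[-1] == "cold" else "heat_off"


agent = Agent(thermostat_policy)
actions = [agent.step(p) for p in ["cold", "warm", "cold"]]
print(actions)  # ['heat_on', 'heat_off', 'heat_on']
```

Passing the decision logic in as a function keeps the sensing and acting loop separate from the part that would be replaced by a more capable policy.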

The structure of an intelligent agent can be viewed as the combination of an architecture and an agent program.

IA structure consists of three main parts:

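Architecture: the physical machinery on which the agent program runs.

Agent function: f: P* → A, which maps a percept sequence (P*) to an action (A).

Agent program: runs on the physical architecture to produce the function f.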

A simple agent program can be defined as an agent function that maps every possible percept sequence to an action that the agent can perform.
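One way to picture such a mapping is a lookup table keyed by the percept sequence seen so far. The sketch below is an assumption-laden illustration, not a practical design: the `TABLE` contents, the percept names, and the `"no_op"` fallback are all hypothetical, and a real table would grow far too large to enumerate.

```python
from typing import Callable, Dict, List, Tuple

# Hypothetical table: percept sequence -> action.
TABLE: Dict[Tuple[str, ...], str] = {
    ("dirty",): "suck",
    ("clean",): "move",
    ("clean", "dirty"): "suck",
    ("clean", "clean"): "stop",
}


def make_table_driven_agent(table: Dict[Tuple[str, ...], str]) -> Callable[[str], str]:
    percepts: List[str] = []  # percept sequence remembered between calls

    def agent_program(percept: str) -> str:
        percepts.append(percept)
        # Look up the whole sequence; fall back to "no_op" if it is not listed.
        return table.get(tuple(percepts), "no_op")

    return agent_program


program = make_table_driven_agent(TABLE)
first = program("clean")    # looks up ("clean",)
second = program("dirty")   # looks up ("clean", "dirty")
third = program("clean")    # sequence of length 3 is not in the table
print(first, second, third)  # move suck no_op
```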

We now have a clear picture of what an intelligent agent is. In artificial intelligence, actions based on logic (rational actions) are especially important because the agent receives a positive reward for each best possible action and a negative reward for each wrong action. Next, let us look at rationality and rational agents.

An ideal rational agent is an agent that performs the best possible action and maximizes the performance measure. The actions from the alternatives are selected based on:

The actions of a rational agent make it as successful as possible for the given percept sequence. The highest-performing agents are rational agents.

Rationality is the quality of being reasonable, sensible, and having good judgment. It is concerned with the actions taken and their results, given what the agent has perceived. Rationality is measured based on the following:

PEAS is a type of model on which an AI agent works. It is used to group similar agents. The environment, actuators, and sensors of the respective agent are considered when defining its performance measure.

PEAS stands for Performance Measure, Environment, Actuators, and Sensors.

Example of Agents with their PEAS representation

| Agent | Performance Measure | Environment | Actuators | Sensors |
|---|---|---|---|---|
| Vacuum Cleaner | Cleanness, Efficiency, Battery Life, Security | Room, Table, Wood floor, Carpet, Various Obstacles | Wheels, Brushes, Vacuum Extractor | Camera, Dirt detection sensor, Cliff sensor, Bump sensor, Infrared wall sensor |
| Automated Car Drive | Comfortable trip, Safety, Maximum Distance | Roads, Traffic, Vehicles | Steering wheel, Accelerator, Brake, Mirror | Camera, GPS, Odometer |
| Hospital Management System | Patient’s health, Admission process, Payment | Hospital, Doctors, Patients | Prescription, Diagnosis, Scan report | Symptoms, Patient’s response |
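A PEAS description is easy to hold as a small record, which also makes it simple to compare agents. The sketch below uses the vacuum cleaner and automated car entries from the examples above; the `PEAS` class and the `shared_sensors` helper are hypothetical names introduced only for this illustration.

```python
from dataclasses import dataclass
from typing import List, Set


@dataclass(frozen=True)
class PEAS:
    """One PEAS description (field names are an assumption for this sketch)."""
    agent: str
    performance_measure: List[str]
    environment: List[str]
    actuators: List[str]
    sensors: List[str]


vacuum = PEAS(
    agent="Vacuum Cleaner",
    performance_measure=["Cleanness", "Efficiency", "Battery Life", "Security"],
    environment=["Room", "Table", "Wood floor", "Carpet", "Various Obstacles"],
    actuators=["Wheels", "Brushes", "Vacuum Extractor"],
    sensors=["Camera", "Dirt detection sensor", "Cliff sensor",
             "Bump sensor", "Infrared wall sensor"],
)

car = PEAS(
    agent="Automated Car Drive",
    performance_measure=["Comfortable trip", "Safety", "Maximum Distance"],
    environment=["Roads", "Traffic", "Vehicles"],
    actuators=["Steering wheel", "Accelerator", "Brake", "Mirror"],
    sensors=["Camera", "GPS", "Odometer"],
)


def shared_sensors(a: PEAS, b: PEAS) -> Set[str]:
    # Sensors that appear in both descriptions, one simple way to compare agents.
    return set(a.sensors) & set(b.sensors)


print(shared_sensors(vacuum, car))  # {'Camera'}
```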

Based on their capabilities and level of perceived intelligence, intelligent agents can be grouped into five main categories.

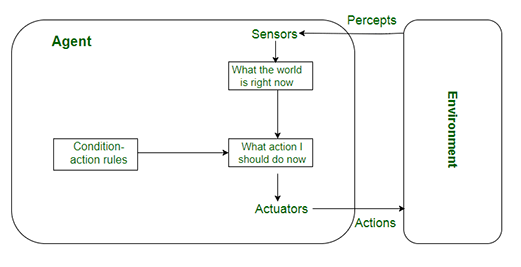

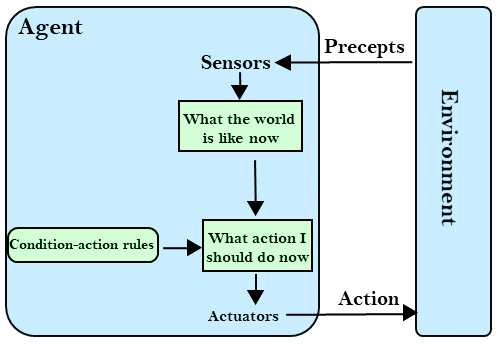

Simple reflex agents act on the current percept only and ignore the percept history. The agent function is based on the condition-action rule, which maps a condition to an action (e.g., a room cleaner agent works only if there is dirt in the room). These agents succeed only when the environment is fully observable. The challenges to the design approach of the simple reflex agent are:

Very limited intelligence
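The condition-action rule described above can be sketched as an ordered list of (condition, action) pairs that is checked against the current percept only. The two-location world ("A" and "B"), the percept fields, and the action names are assumptions made for this example.

```python
from typing import Callable, Dict, List, Tuple

Percept = Dict[str, object]
Rule = Tuple[Callable[[Percept], bool], str]

# Hypothetical rules for a room cleaner; the first matching rule wins.
RULES: List[Rule] = [
    (lambda p: p["dirty"] is True, "suck"),
    (lambda p: p["location"] == "A", "move_right"),
    (lambda p: p["location"] == "B", "move_left"),
]


def simple_reflex_agent(percept: Percept) -> str:
    # No memory: the decision depends only on the percept passed in.
    for condition, action in RULES:
        if condition(percept):
            return action
    return "no_op"  # no rule matched


print(simple_reflex_agent({"location": "A", "dirty": True}))   # suck
print(simple_reflex_agent({"location": "A", "dirty": False}))  # move_right
print(simple_reflex_agent({"location": "B", "dirty": False}))  # move_left
```

Because the function keeps no state, calling it twice with the same percept always gives the same action, which is exactly the limitation noted above.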

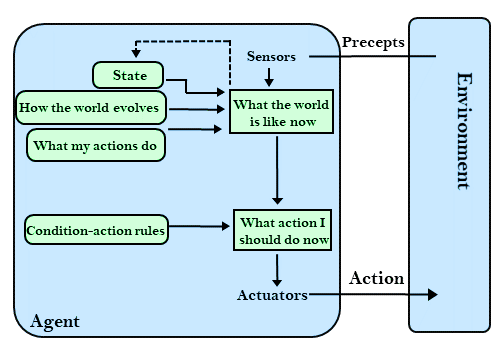

Model-based reflex agents take the percept history into account when acting. They can work well even in an environment that is not fully observable. They use a model of the world to choose their actions, and they maintain an internal state.

Model – knowledge about how things happen in the world, which is why the agent is called a model-based agent.

Internal State – a representation of the unobserved aspects of the current state, built from the percept history.
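A minimal sketch of these two ideas, assuming a hypothetical two-square world ("A" and "B") in which the agent can only sense the square it is standing on. The class name, the action names, and the tiny model ("sucking makes the current square clean") are illustrative assumptions.

```python
from typing import Dict, Optional


class ModelBasedVacuum:
    """Keeps an internal state for squares it cannot currently observe."""

    def __init__(self) -> None:
        # Internal state: last known status of each square (None = unknown).
        self.state: Dict[str, Optional[str]] = {"A": None, "B": None}

    def step(self, location: str, status: str) -> str:
        # Update the internal state from the current percept.
        self.state[location] = status
        if status == "dirty":
            # Model of the world: sucking leaves the current square clean.
            self.state[location] = "clean"
            return "suck"
        other = "B" if location == "A" else "A"
        if self.state[other] == "clean":
            # Both squares are believed clean, so there is nothing left to do.
            return "no_op"
        return "move_right" if location == "A" else "move_left"


vac = ModelBasedVacuum()
print(vac.step("A", "dirty"))  # suck
print(vac.step("A", "clean"))  # move_right (B is still unknown)
print(vac.step("B", "clean"))  # no_op (A is remembered as clean)
```

The last call shows the difference from a simple reflex agent: the percept alone says nothing about square A, but the remembered state lets the agent stop.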

Updating the agent state requires the information about

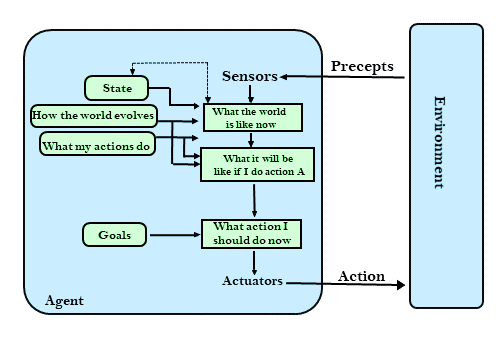

Goal-based agents use goal information to describe desirable situations. They are more capable than model-based reflex agents because the knowledge that supports a decision is modeled explicitly and can therefore be modified. For these agents, knowledge of the current state of the environment is not sufficient to decide what to do: the agent also needs to know its goal, and it chooses actions that achieve that goal. Before deciding whether the goal can be achieved, these agents may have to consider a long sequence of possible actions.

Goal − description of desirable situations
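Considering sequences of actions until one reaches the goal is essentially a search problem. The sketch below uses breadth-first search as one possible strategy (the text does not prescribe a particular one); the `TRANSITIONS` map, the room names, and the `plan` function are hypothetical.

```python
from collections import deque
from typing import Dict, List, Optional

# Hypothetical world model: state -> {action: next_state}.
TRANSITIONS: Dict[str, Dict[str, str]] = {
    "hall": {"go_kitchen": "kitchen", "go_bedroom": "bedroom"},
    "kitchen": {"go_hall": "hall", "go_garage": "garage"},
    "bedroom": {"go_hall": "hall"},
    "garage": {},
}


def plan(start: str, goal: str) -> Optional[List[str]]:
    """Return a shortest action sequence from start to goal, or None."""
    frontier = deque([(start, [])])   # (state, actions taken to reach it)
    visited = {start}
    while frontier:
        state, actions = frontier.popleft()
        if state == goal:
            return actions
        for action, next_state in TRANSITIONS[state].items():
            if next_state not in visited:
                visited.add(next_state)
                frontier.append((next_state, actions + [action]))
    return None  # the goal cannot be reached from the start state


print(plan("bedroom", "garage"))  # ['go_hall', 'go_kitchen', 'go_garage']
print(plan("garage", "hall"))     # None
```

Changing the goal changes the plan without touching the world model, which is the flexibility that explicit goal knowledge is meant to provide.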

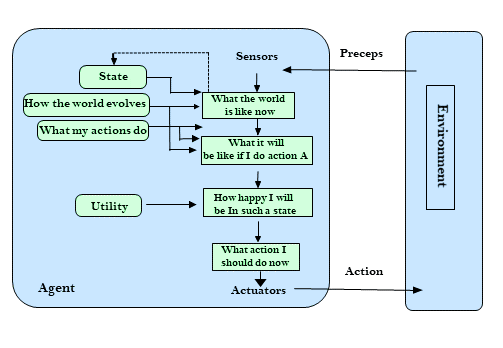

Utility-based agents make their choices based on utility. The additional utility measure makes them more advanced than goal-based agents: they act based not only on the goal but also on the best way to achieve it. Utility-based agents are useful when the agent has to pick the best action from multiple alternatives. A utility function maps each state to a real number, which indicates how efficiently each action achieves the goal.
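As a rough sketch of that idea, the agent below scores the state each action would lead to and picks the action with the highest score. The routes, the numbers, the `risk_weight` parameter, and the form of the utility function are all assumptions made for illustration.

```python
from typing import Dict

# Hypothetical outcomes: action -> resulting state description.
OUTCOMES: Dict[str, Dict[str, float]] = {
    "highway": {"minutes": 30, "risk_pct": 20},
    "city": {"minutes": 45, "risk_pct": 10},
    "backroad": {"minutes": 60, "risk_pct": 5},
}


def utility(state: Dict[str, float], risk_weight: float = 1.0) -> float:
    # Map a state to a real number: shorter and safer trips score higher.
    return -state["minutes"] - risk_weight * state["risk_pct"]


def choose_action(outcomes: Dict[str, Dict[str, float]],
                  risk_weight: float = 1.0) -> str:
    # Pick the action whose resulting state has the highest utility.
    return max(outcomes, key=lambda a: utility(outcomes[a], risk_weight))


print(choose_action(OUTCOMES))                   # highway
print(choose_action(OUTCOMES, risk_weight=5.0))  # backroad
```

All three routes reach the destination, so a goal-based agent would treat them as equal; the utility function is what separates them, and changing its weights changes the preferred action.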

Learning agents are agents that can learn from their previous experience. They start acting with basic knowledge and then, through learning, act and adapt automatically. Learning agents can learn, analyze their performance, and improve it. Learning agents have the following conceptual components:
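As a rough illustration of starting from basic knowledge and improving through feedback, the sketch below keeps a value estimate per action and nudges it toward each observed reward. It is not a full learning-agent architecture: the `LearningAgent` class, the update rule, the learning rate, and the reward values are assumptions chosen to keep the example small and deterministic.

```python
from typing import Dict, List


class LearningAgent:
    """Greedy agent that refines its action-value estimates from rewards."""

    def __init__(self, actions: List[str], learning_rate: float = 0.5) -> None:
        # Basic knowledge: every action starts with the same estimate.
        self.values: Dict[str, float] = {a: 0.0 for a in actions}
        self.learning_rate = learning_rate

    def act(self) -> str:
        # Choose the action with the highest estimate (first one wins ties).
        return max(self.values, key=lambda a: self.values[a])

    def learn(self, action: str, reward: float) -> None:
        # Move the estimate part of the way toward the observed reward.
        old = self.values[action]
        self.values[action] = old + self.learning_rate * (reward - old)


# Hypothetical feedback: a positive reward for a good action, negative otherwise.
REWARDS = {"bump_wall": -1.0, "follow_path": 1.0}

agent = LearningAgent(list(REWARDS))
for _ in range(3):
    chosen = agent.act()
    agent.learn(chosen, REWARDS[chosen])

print(agent.values)  # {'bump_wall': -0.5, 'follow_path': 0.75}
print(agent.act())   # follow_path
```

The agent tries `bump_wall` once, receives a negative reward, and from then on prefers `follow_path`.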

Intelligent agents in artificial intelligence have been applied in many real-life situations.

Intelligent agents improve access to and navigation of information by searching through it with search engines. They can search for a specific data object on behalf of users within a short time.

Some companies have automated functional areas such as customer support and sales to reduce operating costs.

The patient is considered the environment, and a computer keyboard is used as the sensor that receives data on the patient's symptoms. The intelligent agent uses this information to decide the best course of action. Tests and treatments are given through actuators.

For a vacuum cleaner, the surface to be cleaned is the environment (e.g., room, table, carpet). The vacuum cleaner senses the condition of the environment using sensors such as cameras and dirt detection sensors. Actuators such as brushes, wheels, and vacuum extractors perform the actions.

In autonomous driving, cameras, GPS, and radar are employed as sensors to collect information. Pedestrians, other vehicles, roads, and road signs make up the environment. Various actuators, such as brakes, are used to initiate actions.